The video shows that X-Trainer, using an imitation-learning neural network combined with a visual large language model, gained the ability to scrub dishes autonomously after just 2 hours of training, saving 70% of the training time typically required.

From the plate with red food residue, the sponge placed on a yellow plate, and the metal rack behind the plates, the robot deduces that its task is to clean the plates and store them in the metal rack.

It wipes three times, leaving no residual stains.

When the robot had finished washing the dishes and was about to place them in the rack, a human suddenly interfered by dirtying the dishes again. The robot quickly detected this change and responded immediately.

After the video was released, it sparked heated discussion among netizens, many of whom are looking forward to an era of robots doing household chores!

Popular reviews from netizens

Some even joked: if humans keep causing trouble, will the robot keep scrubbing, or will it go on strike?

In fact, X-Trainer integrates cutting-edge intelligent robotics and AI technologies, enabling robots to quickly imitate and learn complex human actions and ultimately achieve behavior cloning.
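Behavior cloning treats imitation as supervised learning: record (observation, action) pairs from human demonstrations, then fit a policy that maps observations to the demonstrated actions. The toy sketch below illustrates only that idea, assuming a single scalar observation and a linear policy fitted by least squares; X-Trainer itself uses a neural network on camera images, and the function name `fit_linear_policy` is hypothetical.

```python
from statistics import mean


def fit_linear_policy(demos):
    """Toy behavior cloning: fit action = w * state + b to demonstrations.

    `demos` is a list of (state, action) pairs recorded from a human
    demonstrator. This linear least-squares fit is an illustrative
    stand-in for the neural network a real system would train.
    """
    states = [s for s, _ in demos]
    actions = [a for _, a in demos]
    s_bar, a_bar = mean(states), mean(actions)
    # Closed-form least-squares slope and intercept.
    cov = sum((s - s_bar) * (a - a_bar) for s, a in demos)
    var = sum((s - s_bar) ** 2 for s in states)
    w = cov / var
    b = a_bar - w * s_bar

    def policy(state):
        # The cloned policy reproduces the demonstrator's mapping.
        return w * state + b

    return policy


# Hypothetical demonstrations where the "expert" always acts as 2 * state + 1.
demos = [(s, 2 * s + 1) for s in range(5)]
policy = fit_linear_policy(demos)
print(policy(10))  # the cloned policy generalizes to an unseen state
```

A real pipeline replaces the linear fit with a deep network and the scalar state with multi-camera images, but the training signal is the same: minimize the gap between predicted and demonstrated actions.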

Lang Xulin, co-founder of Yuejiang Technology, stated that the series of actions X-Trainer performs in the video comes from end-to-end control by the imitation-learning neural network and is fully autonomous after training. The robot's stability and speed have been significantly improved. The overall scheme combines a visual large language model with an imitation-learning neural network.

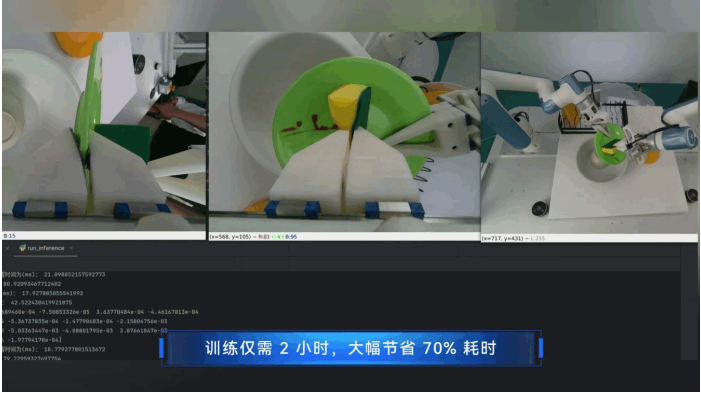

First, the robot's top camera feeds the image above into the visual language model, enabling X-Trainer to complete the following tasks:

- Describing the work scene: dishes stained with food residue, a sponge placed on a yellow plate, and an iron rack behind the dishes, together forming a kitchen scene.

- Reasoning about the task: the visual model infers that dishes with food residue + a sponge on a yellow plate + a metal rack behind the dishes = clean the dishes and store them in the metal rack.

All dual-arm actions are driven by an end-to-end neural network. At 25 Hz, the network receives images from three cameras, mounted on top and on the hands, and completes inference; arm motion at 250 Hz is then generated through a high-performance online motion-planning interface. (According to public information, Figure 01's network receives onboard camera images at 10 Hz.) X-Trainer's 25 Hz end-to-end pipeline and high-performance motion interface improve response speed by 150%, making the robot's operation smoother.
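The two rates work together: each 25 Hz inference yields a new target, and the 250 Hz motion layer fills in 250 / 25 = 10 intermediate commands between targets. The 150% figure appears to match the ratio of update rates, (25 - 10) / 10 = 1.5, i.e. a policy period of 40 ms instead of 100 ms. The sketch below shows this dual-rate structure, assuming a single scalar joint, a hypothetical `policy(t, position)` callable standing in for the neural network, and plain linear interpolation in place of the real online motion planner, whose details are not public.

```python
POLICY_HZ = 25            # network inference rate (from the article)
MOTION_HZ = 250           # arm command rate (from the article)
FIGURE01_POLICY_HZ = 10   # reported Figure 01 image rate (from the article)


def percent_increase(new, old):
    """Relative increase of `new` over `old`, in percent."""
    return (new - old) / old * 100


def interpolate(start, target, steps):
    """Linear interpolation from start to target over `steps` control ticks.

    Assumption: a real online motion planner would enforce velocity,
    acceleration, and jerk limits instead of interpolating linearly.
    """
    return [start + (target - start) * (i / steps) for i in range(1, steps + 1)]


def run(policy, initial, n_policy_steps):
    """Dual-rate loop: one policy call per 10 high-rate motion commands."""
    substeps = MOTION_HZ // POLICY_HZ  # 10 motion commands per inference
    position = initial
    commands = []
    for k in range(n_policy_steps):
        # Hypothetical policy: maps time and current position to a new target.
        target = policy(k / POLICY_HZ, position)
        commands.extend(interpolate(position, target, substeps))
        position = target
    return commands


print(percent_increase(POLICY_HZ, FIGURE01_POLICY_HZ))  # update-rate gain in %
# Toy policy that advances the joint by 1.0 unit per inference step.
cmds = run(lambda t, p: p + 1.0, 0.0, 3)
print(len(cmds), cmds[-1])
```

Separating a slower perception/inference loop from a faster motion loop lets the arm move smoothly even though the network cannot run at the servo rate.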

In January 2024, Figure released a video of Figure 01 making coffee, stating that the robot learned these movements end-to-end with 10 hours of neural-network training. X-Trainer, learning from human demonstrations, can scrub dishes autonomously and quickly correct for real-time interference after just 2 hours of training.

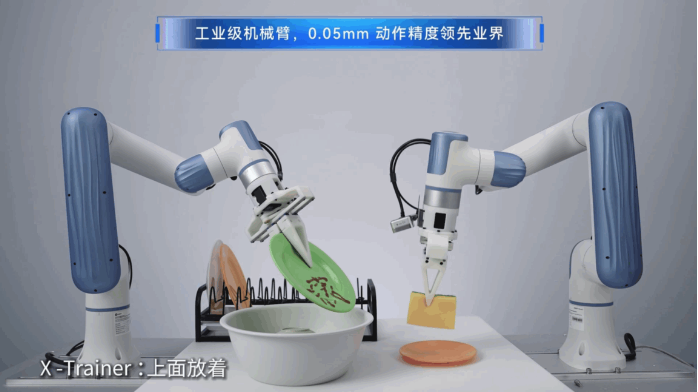

X-Trainer's fast training benefits from its high-precision (0.05 mm) dual arms, which give AI training robots industrial-grade data-collection and motion accuracy, greatly improving the efficiency and quality of task completion and yielding high-quality datasets for rapid training. As an industrial-grade robotic arm, it is widely used in 3C manufacturing, commercial coffee shops, medical moxibustion, and other fields, which helps ensure that trained scenarios can be deployed in practice.

Finally, Lang Xulin stated that imitation-learning neural networks and end-to-end image-to-action mapping are developing rapidly, with both training speed and quality improving. Tesla and Figure have both demonstrated related technical achievements, and the "X" in X-Trainer represents infinite training possibilities. The platform is released to support the development of embodied intelligence in China and to provide a high-performance carrier for deploying AI in industry.

Embodied intelligence refers to an intelligent system that perceives and acts through a physical body. Through interaction between the agent and its environment, it obtains information, understands problems, makes decisions, and carries out actions, thereby producing intelligent, adaptive behavior. It is a key vehicle for AI to interact with the physical world.

Collaborative robots are an important hardware carrier for embodied intelligence, opening up an even larger market that extends from industry to commerce.

Yuejiang Technology has deployed over 70,000 robots globally, with products and services covering 100 countries and regions, and serves dozens of Fortune Global 500 companies, including Lixun Precision, BYD, Foxconn, Huawei, Toyota, and Volkswagen. Its export volume has ranked first for five consecutive years, giving it a rich foundation of embodied-intelligence applications and deployment scenarios.

Yuejiang Technology has long been committed to breakthroughs in AI + robotics and to their industrial deployment. CB Insights has rated it one of the 80 most valuable robotics companies in the world, and it has established cooperative relationships with leading AI universities around the world, including Oxford University, Carnegie Mellon University, the Massachusetts Institute of Technology, and Waseda University. It leads the Guangdong Province Key Field R&D Program for Artificial Intelligence project focused on "autonomous learning of complex skills for multi-degree-of-freedom intelligent agents, key components, and 3C manufacturing demonstration applications". As a national-level specialized and innovative "little giant" enterprise, Yuejiang also took the lead in undertaking the national key R&D plan for intelligent robots in 2022 and has applied for more than 1,200 intellectual property rights. Recognized as a National Intellectual Property Advantage Enterprise, it has built targeted patent portfolios in collaborative robots, core components for humanoid robots, electronic skin, teleoperation, imitation learning, and other areas.
